Secondtruth Blog Archives

-

Post Mortem – Running The Tomb of Annihilation

This is a post mortem about my experience running the 5th edition D&D module Tomb of Annihilation. If you’re a player who may experience this as a campaign, don’t read this post, since it will contain spoilers for the module. If you are a DM who is considering running the module, you might find this…

-

A Cocktail for Braid

Have a Super Mar 10! For Mario March I am creating Mario-inspired food and drink. I’m going to be playing Braid today. In the spirit of The Drunken Moogle, I thought it would be nice to create a cocktail to celebrate this game, and drink while I play. Some game and geek cocktails only mimic…

-

Celebrating Mario March

Hi Everyone! Have you been watching my Twitch stream? No? Well, it’s at http://www.twitch.com/litagemini. This is a really good place to find me lately, since I’m trying to get into the habit of streaming more often. I’ve gotten involved with an awesome group of streamers, all local to Philadelphia, and it’s a blast playing games…

-

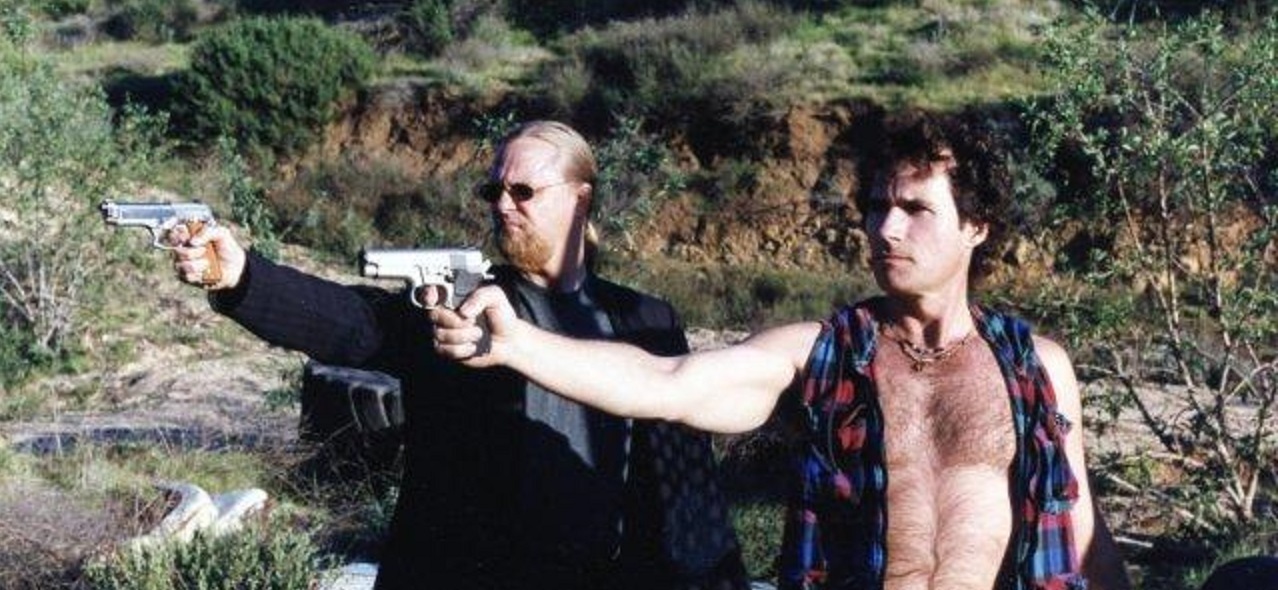

The Unintentional Tech Wisdom of Scott Shaw

There’s a B movie I’m really fond of called Guns of El Chupacabra. If you’ve hung out with me and my husband for long enough, you’re probably familiar with the movie. We discovered it through the Smithee Awards ceremony at Origins Gaming Convention, and have been sharing it with people for years. The hero of…

-

Writing Roundup

This blog hasn’t been too busy lately, I know. But if you’re interested, I’ve been posting a lot of articles that I was sitting on for a while on the games blog I write for, Tap-Repeatedly.com: I Play Fighting Games for the Story – about the story mode in Street Fighter V and why it…

-

What Is a Technical Evangelist?

I get this question a lot! My co-worker, Dave Voyles, made a video about it a while ago, but I thought I’d make one from my perspective, after three years on the job. Thanks for watching!

-

Catch me on Twitch!

Cross-posted from Tap-Repeatedly.com: I can’t always find time to write long essays about games. I’m often busy playing them! I figured I’d go ahead and share that gameplay with all of you, so I re-invigorated my Twitch stream. I haven’t streamed since Extra Life several years ago, so I’m shaking off the dust. Check my…

-

Making Makoto’s Black Dress

This last weekend I went to the Sailor Moon Silver Millennium Masquerade Ball! It was an event in celebration of the 25th anniversary of Sailor Moon, with formal costumes, drinks, dancing, and moonlight. We enjoyed the event very much. But what does someone wear to something like this? Well… I wanted to do a “canon”…

-

Making a Connection Between Unity 3D and Azure IoT Hub

This is a technical blog post about a problem and solution that I encountered with Unity 3D and Azure. Hopefully it will be of use to someone out there.

-

What Is: Microsoft Cognitive Services

Lately I’ve taken an interest in Microsoft’s Cognitive Services suite, and given some talks about Cognitive Services around the country. Since it makes sense to take some notes on this process, I’m going to write a couple of blog entries going through some of the services available and some sample code for people who want…
